Implementing Enterprise-Grade MLOps Infrastructure: A Step-by-Step Guide

MLOps is no longer a luxury—it’s a necessity for organizations looking to operationalize machine learning models at scale. In my experience managing AI deployments in enterprise environments, the difference between a successful MLOps rollout and a failed one often comes down to how the infrastructure is designed and automated from day one.

Below is a comprehensive, experience-backed guide on implementing MLOps infrastructure that is both scalable and production-ready.

1. Define Your MLOps Architecture

Before touching code, it’s critical to establish a reference architecture that includes:

- Data Ingestion Layer (ETL pipelines, data lakes, streaming ingestion)

- Feature Store (centralized repository for reusable ML features)

- Model Training Environment (GPU/CPU clusters, Kubernetes, or cloud ML services)

- Model Registry (version control for models)

- CI/CD Pipeline (automated training, testing, and deployment)

- Monitoring & Logging (prediction drift, resource usage, business KPIs)

Pro-tip: In production environments, I’ve found that separating training and inference infrastructure avoids resource contention and enables independent scaling.

[Architecture Diagram Placeholder: MLOps Infrastructure with Data Layer, Training Cluster, Model Registry, CI/CD, and Monitoring]

2. Step-by-Step MLOps Infrastructure Deployment

Step 1: Provision Compute & Storage

- On-prem: Use VMware vSphere or OpenStack for virtualization; integrate GPU servers (NVIDIA A100, RTX 6000) for training workloads.

- Cloud: Use managed Kubernetes services (EKS, GKE, AKS) with auto-scaling GPU node pools.

- Storage: Implement high-throughput storage (Ceph, NetApp, or AWS S3) with versioned datasets.

In my experience, using NVMe SSDs for feature store caching drastically reduces training time in iterative experiments.

Step 2: Deploy Kubernetes for Orchestration

Kubernetes is the backbone for scaling ML workloads. Install and configure:

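```bash
# Install Kubernetes via kubeadm
sudo kubeadm init --pod-network-cidr=10.244.0.0/16

# Make kubectl usable for the current user
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Install Flannel CNI
kubectl apply -f https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml
```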

Separate training and inference workloads into their own namespaces:

```bash
kubectl create namespace ml-training
kubectl create namespace ml-inference
```
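Namespace separation becomes more useful once each namespace carries its own resource limits. The sketch below builds a Kubernetes ResourceQuota manifest that caps GPU requests in the ml-training namespace and prints it as JSON, which kubectl accepts alongside YAML. The quota name `gpu-quota` and the limit of 4 GPUs are illustrative assumptions, and the resource key assumes the NVIDIA device plugin, which exposes GPUs as `nvidia.com/gpu`.

```python
import json


def gpu_quota_manifest(namespace: str, max_gpus: int, name: str = "gpu-quota") -> dict:
    """Build a ResourceQuota that caps total GPU requests in a namespace.

    The quota name and the limit are illustrative. Quotas on extended
    resources such as GPUs are expressed with the "requests." prefix.
    """
    if max_gpus < 0:
        raise ValueError("max_gpus must be non-negative")
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"hard": {"requests.nvidia.com/gpu": str(max_gpus)}},
    }


if __name__ == "__main__":
    # Pipe the output into: kubectl apply -f -
    print(json.dumps(gpu_quota_manifest("ml-training", max_gpus=4), indent=2))
```

Generating the manifest from code keeps the limit in one reviewable place, which fits an Infrastructure as Code workflow.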

Step 3: Implement a Feature Store

Use Feast or Hopsworks to centralize feature engineering:

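```python
from feast import FeatureStore

# Load the feature repository definition from the feature_repo directory
store = FeatureStore(repo_path="feature_repo")

# Fetch the latest online feature values for a single customer
features = store.get_online_features(
    features=["customer:age", "customer:transaction_count"],
    entity_rows=[{"customer_id": 1001}],
).to_dict()
```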

A common pitfall I’ve seen is skipping the feature store, which leads to duplicated feature logic and inconsistent results between training and inference (training/serving skew).
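To make this pitfall concrete, here is a minimal sketch, independent of Feast or Hopsworks, of the idea a feature store enforces: one feature definition shared by the training and inference paths. The function and field names (`compute_features`, `birth_year`, `transactions`) are hypothetical, and the age calculation is deliberately crude.

```python
from datetime import date


def compute_features(raw: dict, today: date) -> dict:
    """Single source of truth for feature logic (hypothetical fields)."""
    return {
        "age": today.year - raw["birth_year"],
        "transaction_count": len(raw["transactions"]),
    }


def build_training_rows(records: list[dict], today: date) -> list[dict]:
    # Offline path: batch-compute features for every historical record.
    return [compute_features(r, today) for r in records]


def build_inference_row(record: dict, today: date) -> dict:
    # Online path: same function, so the model sees identical feature logic.
    return compute_features(record, today)


if __name__ == "__main__":
    record = {"birth_year": 1990, "transactions": [12.5, 40.0, 7.25]}
    as_of = date(2024, 1, 1)
    # Both paths must agree for the same input.
    assert build_training_rows([record], as_of)[0] == build_inference_row(record, as_of)
    print(build_inference_row(record, as_of))
```

If the two paths each carried their own copy of the age or count logic, a change to one copy would silently change what the model sees in production.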

Step 4: Automate Model Training & Deployment

Use Kubeflow Pipelines or MLflow with CI/CD integration:

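```yaml
# GitHub Actions CI/CD for ML model deployment
name: mlops-deploy

on:
  push:
    branches:
      - main

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3

      - name: Install dependencies
        run: pip install -r requirements.txt  # assumed to list mlflow and the training dependencies

      - name: Train Model
        run: python train.py

      # The MLflow CLI has no register command, so registration uses the Python API.
      # Assumes train.py logged the model to a run and that MODEL_URI
      # (for example runs:/<run_id>/model) and MLFLOW_TRACKING_URI are set for the job.
      - name: Register Model
        run: python -c "import os, mlflow; mlflow.register_model(os.environ['MODEL_URI'], 'my_model')"

      - name: Deploy to Kubernetes
        run: kubectl apply -f inference-deployment.yaml
```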
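The workflow above trains and deploys without checking model quality in between. One way to close that gap is a gate that runs after the Train Model step and fails the job when the candidate underperforms. The sketch below assumes train.py writes a metrics.json file with an accuracy field and that a baseline is kept in baseline.json. Both file names, the metric, and the tolerance are assumptions, not part of the original pipeline.

```python
import json
from pathlib import Path


def passes_gate(candidate: float, baseline: float, tolerance: float = 0.01) -> bool:
    """Accept the candidate if it is no worse than the baseline minus tolerance."""
    return candidate >= baseline - tolerance


def main(metrics_path: str = "metrics.json", baseline_path: str = "baseline.json") -> int:
    """Return a process exit code: 0 to continue the pipeline, 1 to stop it."""
    # Both files are hypothetical outputs of earlier pipeline steps.
    candidate = json.loads(Path(metrics_path).read_text())["accuracy"]
    baseline = json.loads(Path(baseline_path).read_text())["accuracy"]
    if passes_gate(candidate, baseline):
        print(f"PASS: candidate {candidate:.4f} vs baseline {baseline:.4f}")
        return 0
    print(f"FAIL: candidate {candidate:.4f} vs baseline {baseline:.4f}")
    return 1
```

Saved as a script that ends with `sys.exit(main())`, this would make a non-zero exit code fail the CI step, so the Register Model and Deploy to Kubernetes steps never run for a regressed model.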

Step 5: Integrate Model Registry

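MLflow’s Model Registry allows version control and stage transitions through its Python client (note that MLflow 2.9 and later deprecate stages in favor of model version aliases):

```bash
# The MLflow CLI has no transition command, so call the Python client
python -c "from mlflow.tracking import MlflowClient; MlflowClient().transition_model_version_stage('my_model', '3', 'Production')"
```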

In my experience, enforcing registry usage prevents accidental overwrites and ensures reproducibility.
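One way to see why a registry prevents overwrites is to store each artifact under a name derived from its content. The sketch below is not how MLflow stores models. It is a minimal stand-in in which the directory layout and the `store_artifact` helper are assumptions.

```python
import hashlib
import shutil
from pathlib import Path


def file_sha256(path: Path) -> str:
    """Hash the file in chunks so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def store_artifact(src: Path, store_dir: Path) -> Path:
    """Copy src into store_dir under its content hash; never overwrite."""
    store_dir.mkdir(parents=True, exist_ok=True)
    dest = store_dir / f"{file_sha256(src)}{src.suffix}"
    if not dest.exists():
        # Same bytes always map to the same name, so an existing file is identical.
        shutil.copy2(src, dest)
    return dest
```

Because the file name is the hash, retraining produces a new file instead of replacing the old one, and any deployed model can be traced back to its exact bytes.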

Step 6: Monitoring & Alerting

Deploy Prometheus and Grafana for resource monitoring, plus Evidently AI for data drift detection:

```bash
kubectl apply -f prometheus-deployment.yaml
kubectl apply -f grafana-deployment.yaml
```

For drift detection:

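```python
# Evidently's Report API as used in the 0.4.x releases; import paths differ in 0.7+
from evidently.report import Report
from evidently.metric_preset import DataDriftPreset

# reference_data and current_data are pandas DataFrames with the same columns
drift_report = Report(metrics=[DataDriftPreset()])
drift_report.run(reference_data=reference_data, current_data=current_data)
```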
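To show what a drift check computes under the hood, here is a dependency-free sketch of the Population Stability Index (PSI) for one numeric feature. This is a different, simpler technique than the statistical tests Evidently runs, and the bin count is an assumption.

```python
import math


def psi(reference: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference column

    def bin_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = bin_shares(reference), bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))
```

A PSI near zero means the two samples fall into the bins in nearly the same proportions. A common rule of thumb treats values above roughly 0.2 as drift worth investigating, though the right threshold depends on the feature.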

3. Best Practices from Real-World Deployments

- Separate Dev/Test/Prod Environments – Avoid testing experimental models in production clusters.

- GPU Quotas in Kubernetes – Prevent rogue jobs from consuming all GPUs.

- Immutable Model Artifacts – Store models with unique hashes; never overwrite files.

- Infrastructure as Code (IaC) – Use Terraform or Ansible for repeatable deployments.

- Automated Rollback – Always have rollback scripts for failed deployments.
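The automated rollback practice can be sketched as a small wrapper around two kubectl subcommands, `kubectl rollout status` and `kubectl rollout undo`. The deployment name, the default namespace, and the timeout are assumptions, and the command runner is injectable so the logic can be exercised without a cluster.

```python
import subprocess
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], int]


def run_command(cmd: Sequence[str]) -> int:
    """Run a command and return its exit code."""
    return subprocess.run(list(cmd), check=False).returncode


def deploy_with_rollback(
    manifest: str,
    deployment: str,
    namespace: str = "ml-inference",
    timeout: str = "120s",
    run: Runner = run_command,
) -> bool:
    """Apply a manifest; undo the rollout if it fails to become healthy."""
    target = ["-n", namespace, f"deployment/{deployment}"]
    if run(["kubectl", "apply", "-n", namespace, "-f", manifest]) != 0:
        return False  # apply failed; stop before waiting on a rollout
    if run(["kubectl", "rollout", "status", *target, f"--timeout={timeout}"]) == 0:
        return True
    # The new revision never became ready: return to the previous revision.
    run(["kubectl", "rollout", "undo", *target])
    return False
```

A call such as `deploy_with_rollback("inference-deployment.yaml", "inference")` would return False after restoring the previous revision, which a CI job can turn into a failed step.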

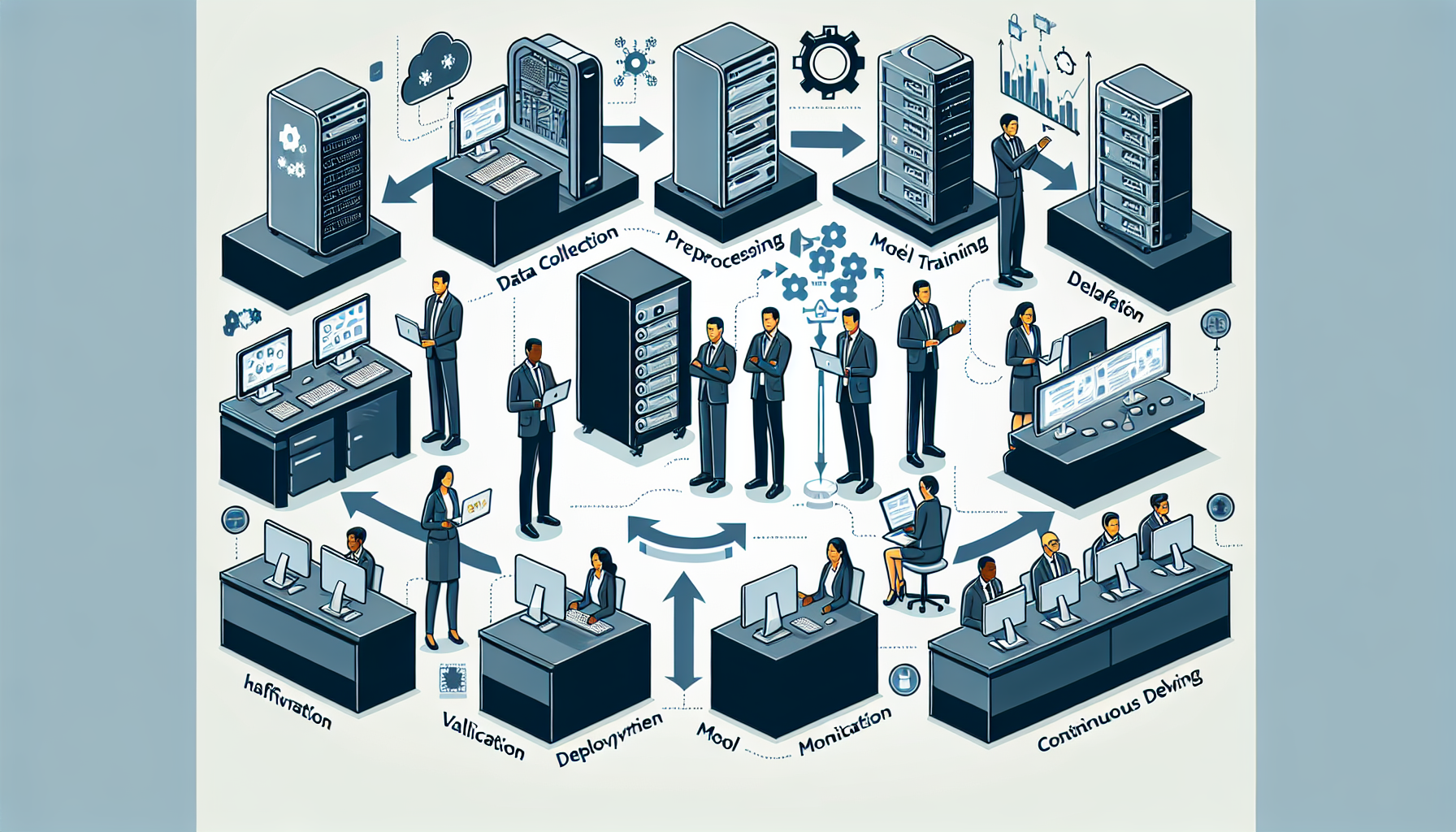

4. Example MLOps Deployment Workflow

[Workflow Diagram Placeholder: Data ingestion → Feature store → Training pipeline → Model registry → CI/CD → Inference service → Monitoring]

Conclusion

Implementing MLOps infrastructure requires more than just tools—it’s about designing for scale, automation, and reproducibility. In my experience, organizations that invest in a robust MLOps foundation see faster deployment cycles, fewer production incidents, and higher model ROI.

By following this step-by-step guide, you can build a production-ready MLOps infrastructure capable of handling enterprise-level AI workloads.

Next Step: Deploy your training cluster with GPU auto-scaling and integrate model drift monitoring before onboarding your first production model. This will save countless troubleshooting hours down the line.

Ali YAZICI is a Senior IT Infrastructure Manager with 15+ years of enterprise experience. While a recognized expert in datacenter architecture, multi-cloud environments, storage, and advanced data protection and Commvault automation, his current focus is on next-generation datacenter technologies, including NVIDIA GPU architecture, high-performance server virtualization, and implementing AI-driven tools. He shares his practical, hands-on experience through a combination of personal field notes and “Expert-Driven AI”: he uses AI tools as an assistant to structure drafts, which he then heavily edits, fact-checks, and infuses with his own practical experience, original screenshots, and “in-the-trenches” insights that only a human expert can provide.
