Optimizing IT Infrastructure for High-Performance Content Delivery: A Practical Guide from the Datacenter Floor

In my experience managing enterprise IT infrastructure for large-scale content delivery networks (CDNs) and high-traffic platforms, the difference between a smooth streaming experience and buffering frustration often comes down to how well your backend is architected. Optimizing IT infrastructure for content delivery is not just about throwing more hardware at the problem. It is about orchestrating compute, storage, and networking so that latency stays low, throughput stays high, and capacity scales with demand.

Below is a step-by-step guide based on real-world scenarios I’ve handled, complete with pitfalls to avoid and pro-tips that can save you from costly downtime.

Step 1: Architect for Edge Computing

Why: The closer your content is to the end-user, the faster it will load.

How: Deploy caching nodes at geographically distributed edge locations.

Pro-tip:

In one deployment I managed, we reduced latency by 40% simply by placing edge nodes in previously underserved Tier-2 cities. Location alone was not enough: each edge node also needed SSD-based cache storage and high-speed uplinks to the origin.

Implementation Example (Nginx Edge Cache Config):

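```nginx
# Cache storage: 10 MB key zone, up to 20 GB on disk; entries idle for 60 minutes are evicted.
# proxy_cache_path must be declared in the http context.
proxy_cache_path /var/cache/nginx levels=1:2 keys_zone=cdn_cache:10m max_size=20g inactive=60m use_temp_path=off;

server {
    location / {
        proxy_cache cdn_cache;
        # origin_server must resolve to your origin (for example, an upstream block).
        proxy_pass http://origin_server;
        proxy_cache_valid 200 302 10m;   # cache successful responses and redirects for 10 minutes
        proxy_cache_valid 404 1m;        # cache "not found" responses briefly to shield the origin
    }
}
```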
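To make the `levels=1:2` setting in the config above more concrete, here is a minimal Python sketch of how nginx lays out cache files on disk: the file name is the MD5 of the cache key, and the directory levels are taken from the end of that hash. The function name `nginx_cache_file` and the sample URI are hypothetical, and the key format assumes nginx's default `proxy_cache_key` (`$scheme$proxy_host$request_uri`) has not been overridden.

```python
import hashlib


def nginx_cache_file(cache_root: str, cache_key: str, levels=(1, 2)) -> str:
    """Return the on-disk path nginx would use for a cache key.

    With levels=1:2 the first directory is the last hex character of the
    MD5 digest and the second directory is the two characters before it.
    """
    digest = hashlib.md5(cache_key.encode()).hexdigest()
    parts = []
    end = len(digest)
    for width in levels:
        parts.append(digest[end - width:end])
        end -= width
    return "/".join([cache_root.rstrip("/"), *parts, digest])


# Assumed default key: scheme + proxy host + request URI, with no separators.
key = "http" + "origin_server" + "/video/segment-001.ts"
print(nginx_cache_file("/var/cache/nginx", key))
```

Knowing the layout helps when you need to inspect or manually remove a single cached object on an edge node.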

Step 2: Optimize Storage for High IOPS and Throughput

Why: Content delivery relies heavily on fast read performance for large volumes of small files (thumbnails, metadata) and streaming chunks.

Best Practices:

– Use NVMe SSD arrays for hot content.

– Implement tiered storage: NVMe for hot, SATA SSD for warm, object storage for cold.

– Enable parallel file access using clustered file systems (e.g., CephFS, GlusterFS).

Pitfall to Avoid:

I’ve seen admins deploy large HDD arrays thinking capacity equals performance. For CDN workloads, this leads to severe IOPS bottlenecks. Always benchmark storage against your content retrieval patterns.
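The hot/warm/cold tiering described above can be sketched as a simple placement rule driven by read frequency. This is an illustration only: the thresholds, the `ObjectStats` type, and the tier labels are assumptions and should be replaced with values derived from your own benchmarks and access logs.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them against measured retrieval patterns.
HOT_READS_PER_DAY = 1000
WARM_READS_PER_DAY = 10


@dataclass
class ObjectStats:
    key: str
    reads_last_24h: int


def choose_tier(stats: ObjectStats) -> str:
    """Map an object's recent read count to a storage tier."""
    if stats.reads_last_24h >= HOT_READS_PER_DAY:
        return "nvme"            # hot content
    if stats.reads_last_24h >= WARM_READS_PER_DAY:
        return "sata-ssd"        # warm content
    return "object-storage"      # cold content


for obj in (ObjectStats("thumb/home.jpg", 25_000),
            ObjectStats("video/ep12/seg4.ts", 140),
            ObjectStats("archive/2019/promo.mp4", 1)):
    print(obj.key, "->", choose_tier(obj))
```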

Step 3: Leverage Content-Aware Load Balancing

Why: Not all content is equal—some files are requested hundreds of times per second while others are rarely accessed.

How: Implement load balancers that prioritize based on content type and popularity metrics.

Implementation Example (HAProxy Weighted Routing):

```haproxy
backend cdn_backend
    balance roundrobin
    # node1 receives roughly twice the share of requests that node2 does
    server node1 10.0.0.1:80 weight 10 check
    server node2 10.0.0.2:80 weight 5 check
```
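To see what the 10:5 weighting means in practice, the sketch below simulates a smooth weighted round-robin over the two nodes. It is an illustration of the resulting request ratio, not a description of HAProxy's internal scheduler, which may order individual picks differently. The function name is hypothetical.

```python
from collections import Counter


def smooth_weighted_round_robin(weights: dict, picks: int) -> list:
    """Return the order in which servers are chosen over `picks` requests."""
    current = {name: 0 for name in weights}
    total = sum(weights.values())
    order = []
    for _ in range(picks):
        # Every server gains its weight, the leader is chosen,
        # then the leader is penalised by the total weight.
        for name, weight in weights.items():
            current[name] += weight
        chosen = max(current, key=current.get)
        current[chosen] -= total
        order.append(chosen)
    return order


order = smooth_weighted_round_robin({"node1": 10, "node2": 5}, 15)
print(Counter(order))
```

Over any 15 consecutive requests, node1 serves 10 and node2 serves 5, interleaved rather than sent in bursts.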

Step 4: Implement Intelligent Caching Strategies

Why: Over-caching stale content wastes resources, while under-caching hot content causes unnecessary origin hits.

Best Practices:

– Use cache purging APIs integrated with your CMS to instantly invalidate outdated content.

– Deploy tiered caches: edge cache → regional cache → origin.

– Monitor cache hit ratios and adjust TTLs dynamically.
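The last practice above, adjusting TTLs from observed hit ratios, can be sketched as a small feedback rule. The target ratio, the doubling step, and the TTL bounds are assumptions chosen for illustration; whether a longer TTL is acceptable also depends on how fresh the content must be, which this sketch does not model.

```python
def hit_ratio(hits: int, misses: int) -> float:
    """Fraction of requests served from cache."""
    total = hits + misses
    return hits / total if total else 0.0


def adjust_ttl(current_ttl_s: int, ratio: float, target: float = 0.90,
               min_ttl_s: int = 60, max_ttl_s: int = 3600) -> int:
    """Lengthen the TTL when the hit ratio is below target, else keep it."""
    new_ttl = current_ttl_s * 2 if ratio < target else current_ttl_s
    return max(min_ttl_s, min(max_ttl_s, new_ttl))


ratio = hit_ratio(hits=800, misses=200)
print(ratio, adjust_ttl(600, ratio))
```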

Step 5: Network Optimization for Low Latency

Why: Your network is the highway for content delivery—its quality determines the experience.

Best Practices:

– Enable TCP Fast Open to reduce handshake latency.

– Use BGP Anycast for routing requests to the nearest node.

– Implement QoS policies to prioritize CDN traffic over background processes.

Pro-tip:

In a global video streaming project, enabling Anycast reduced packet travel distance by an average of 1,200 miles, slashing latency from 250ms to under 80ms for remote users.
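As a rough sanity check on distance-related latency, the sketch below estimates how much round-trip time 1,200 miles of path length contributes on its own. It assumes signals travel through fiber at about 200,000 km/s (roughly two-thirds of the speed of light) and treats the 1,200 miles as one-way distance; both are assumptions, not figures from the project.

```python
MILES_TO_KM = 1.609344
FIBER_KM_PER_S = 200_000  # assumed propagation speed in optical fiber


def propagation_rtt_ms(distance_miles: float) -> float:
    """Round-trip propagation delay in milliseconds for a one-way distance."""
    one_way_s = distance_miles * MILES_TO_KM / FIBER_KM_PER_S
    return 2 * one_way_s * 1000


print(round(propagation_rtt_ms(1200), 1))  # about 19.3 ms per round trip
```

Propagation is paid on every round trip, so connection setup and each request multiply the saving. It is still only one component of the improvement reported above, and the project figures do not break down the rest.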

Step 6: Deploy Kubernetes for Elastic Scaling

Why: Content delivery demand spikes unpredictably—Kubernetes allows auto-scaling based on real-time load.

Implementation Example (Kubernetes Horizontal Pod Autoscaler):

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: cdn-service-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: cdn-service
  minReplicas: 5
  maxReplicas: 50
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
```
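To reason about how this autoscaler reacts, it helps to work through the scaling rule documented for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), clamped to the min/max bounds. The Python sketch below applies that rule with the values from the manifest above. It deliberately ignores the controller's tolerance band and stabilization windows, and the function name is hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float = 70,
                     min_replicas: int = 5, max_replicas: int = 50) -> int:
    """Apply the HPA ratio rule, then clamp to the configured bounds."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(10, 90))   # load above target: scale out to 13
print(desired_replicas(5, 10))    # load far below target: held at minReplicas (5)
print(desired_replicas(40, 140))  # extreme spike: capped at maxReplicas (50)
```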

Step 7: Monitoring and Predictive Analytics

Why: Optimization is not a one-time process—it’s ongoing.

Best Practices:

– Use Prometheus + Grafana for real-time metrics.

– Implement predictive scaling algorithms to pre-empt traffic spikes.

– Monitor error rates and cache misses to fine-tune infrastructure.
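As a very simple stand-in for the predictive scaling mentioned above, the sketch below smooths recent request rates with an exponentially weighted moving average and converts the estimate into a replica count with some headroom. A production system would likely use a proper forecasting model with trend and seasonality; the smoothing factor, per-replica capacity, and headroom values here are assumptions.

```python
import math


def ewma_forecast(samples: list, alpha: float = 0.5) -> float:
    """Smoothed estimate of the next value; higher alpha favours recent samples."""
    if not samples:
        raise ValueError("need at least one sample")
    level = samples[0]
    for value in samples[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


def replicas_for(forecast_rps: float, rps_per_replica: float,
                 headroom: float = 1.25) -> int:
    """Replicas needed to serve the forecast rate with spare capacity."""
    return math.ceil(forecast_rps * headroom / rps_per_replica)


recent_rps = [800, 900, 1100, 1300]  # hypothetical requests per second
forecast = ewma_forecast(recent_rps)
print(forecast, replicas_for(forecast, rps_per_replica=150))
```

Because an EWMA lags a rising trend, treat its output as a floor for pre-scaling rather than a precise prediction.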

Step 8: Security Without Performance Penalty

Why: Content delivery must be secure, but encryption can introduce overhead.

Best Practices:

– Use TLS session resumption to reduce handshake times.

– Offload encryption to dedicated hardware accelerators.

– Deploy WAF at the edge to prevent malicious traffic from reaching core systems.
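The benefit of session resumption is easiest to see by counting round trips before the first request can be sent. The sketch below uses the standard handshake costs (TLS 1.2: two round trips for a full handshake, one when resumed; TLS 1.3: one for a full handshake) plus one round trip for the TCP handshake. The 80 ms RTT is only an example value, and the function name is hypothetical.

```python
# TLS round trips required before application data can flow.
TLS12_FULL = 2
TLS12_RESUMED = 1
TLS13_FULL = 1


def time_to_first_request_ms(rtt_ms: int, tls_round_trips: int,
                             tcp_round_trips: int = 1) -> int:
    """Connection setup time: TCP handshake plus TLS handshake."""
    return rtt_ms * (tcp_round_trips + tls_round_trips)


rtt = 80  # example round-trip time in milliseconds
print("TLS 1.2 full:   ", time_to_first_request_ms(rtt, TLS12_FULL))
print("TLS 1.2 resumed:", time_to_first_request_ms(rtt, TLS12_RESUMED))
print("TLS 1.3 full:   ", time_to_first_request_ms(rtt, TLS13_FULL))
```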

Final Thoughts

Optimizing IT infrastructure for content delivery is about strategic placement, intelligent caching, high-speed storage, and elastic scaling. In my experience, the winning formula is to treat your CDN as a living organism—monitor its health constantly, feed it with the right resources at the right time, and ensure it adapts to environmental changes automatically.

When done correctly, you’ll not only achieve sub-second delivery times but also build an infrastructure that scales effortlessly with your audience growth.

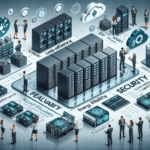

Visual Aid Placeholder:

[Diagram: Multi-tier CDN architecture with edge nodes, regional caches, and origin servers connected via Anycast network]

Ali YAZICI is a Senior IT Infrastructure Manager with 15+ years of enterprise experience. A recognized expert in datacenter architecture, multi-cloud environments, storage, advanced data protection, and Commvault automation, he currently focuses on next-generation datacenter technologies, including NVIDIA GPU architecture, high-performance server virtualization, and AI-driven tools. He shares practical, hands-on experience that combines his personal field notes with “Expert-Driven AI”: he uses AI tools as an assistant to structure drafts, which he then heavily edits, fact-checks, and infuses with his own practical experience, original screenshots, and “in-the-trenches” insights that only a human expert can provide.
